Antarctica is a unique place from which to observe the universe. One Antarctic night lasts 4 months! Astrophysicist Lifan Wang and the international team of researchers developing the telescopes and CSTAR observatory on Dome A in Antarctica are pioneers exploring the nature of the universe. Their telescopes peer deep into space, looking for exoplanets and variable stars and helping solve the mysteries of dark matter. Our interactive artwork is made from data of the universe, never before seen or heard, from one entire Antarctic night! INSTRUMENT: One Antarctic Night is supported in part by the National Endowment for the Arts.

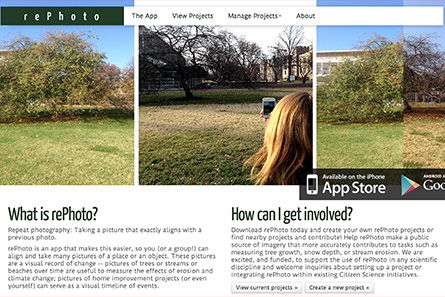

PROJECT REPHOTO

Available for iOS and Android devices, rePhoto is an image capture application explicitly designed to support repeat photography: the process of taking a new image from exactly the same perspective as a previous image. rePhoto makes this easier by showing the previous picture semi-transparently, so that the new picture can be aligned more accurately. rePhoto is the result of an ongoing collaboration between researchers at University of Vermont, Washington University St. Louis, and University of North Texas.

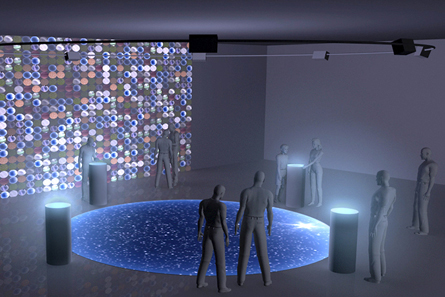

ATLAS IN SILICO

Reflects on one of the elemental scientific and cultural challenges of our time: the shift from an organism-centric to a sequence-centric view of nature made possible by metagenomics and its ensuing impact on our understanding of the nature, origins and unity of life. It is a physically interactive virtual environment/installation and art-science collaboration that provides a unique aesthetic encounter with metagenomics data (and contextual metadata) from the largest known protein sequence dataset, the Global Ocean Survey (GOS) – a ground-breaking snapshot of biodiversity in the world’s oceans.

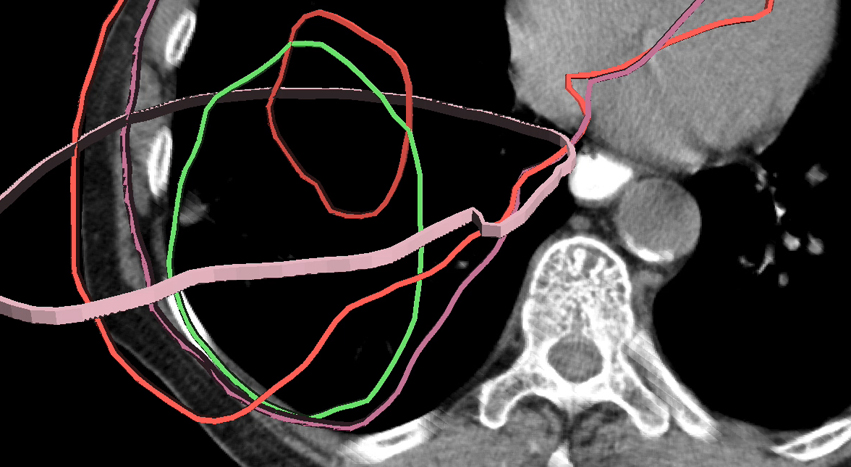

SPECIALIZED INTERFACES FOR SEGMENTATION DATA

A user experience and interface development research project focused on enabling experts and the general public (citizen scientists) to collaborate in the identification and segmentation of structures within time-varying volumetric data. We are designing methods for navigating within volumetric data and retaining a felt-sense of one’s spatial orientation within the volume, thus enabling users to know where they are within data volumes while utilizing non-parallel (oblique) views. Our twofold aim is to remove segmentation as a bottleneck to discovery and to enable citizen scientists to participate in bio-imaging research in ways that generate calibrated and validated data with the potential to accelerate scientific discovery.

PEIR: PERSONALIZED ENVIRONMENTAL IMPACT REPORT

A participatory sensing application that uses location data sampled from everyday mobile phones to calculate personalized estimates of environmental impact and exposure.

ECCE HOMOLOGY

An interactive installation that bridges art and science through the use of dynamic media, computer vision and computer graphics. Named after Friedrich Nietzsche’s Ecce Homo, a meditation on how one becomes what one is, the project explores human evolution by examining similarities – known as “homology” – between genes from human beings and a target organism, in this case the rice plant.

CREATIVE & SCIENTIFIC CONSULTING

For Recoding Innovation, an NSF-sponsored film series dedicated to exploring the ways in which ethical considerations positively drive innovations in scientific discovery.

THE TRAJECTORY OF FORGETTING

An interactive installation exploring the nature of memory, its creation, erasure, and transformation, in an interplay between genotype and phenotype, and its centrality to our construct of Self and consciousness.

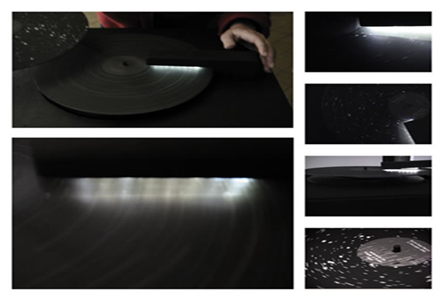

STARS

A work that reflects upon the intersection of gender and the history of science. The sound installation transforms astronomical data, digitally imaged onto 12″ vinyl LPs, into unique tonal compositions reminiscent of radio astronomy recordings of pulsars. The strange yet somehow familiar music mediates the relationship of the data to the history of its production: each LP contains the full-hemisphere astronomical data for the birth or death of each of several women members of the Harvard College Observatory. Known collectively as “The Harvard Computers,” these women were responsible for developing the schema used for classifying stars by their spectra and for cataloging and categorizing the majority of stellar data used as the basis for astronomical maps.

DREAMSPACE FRAGMENTS

An exploration into generating representations of the narrative content of dreams as virtual environments, or “dreamspaces.” Using a derivative of the classification system for dream content analyses developed by Calvin Hall, I encoded the narrative content of dreams into numeric values, which were then used to define the parameters for generating 3-dimensional objects that are rendered as VRML worlds.

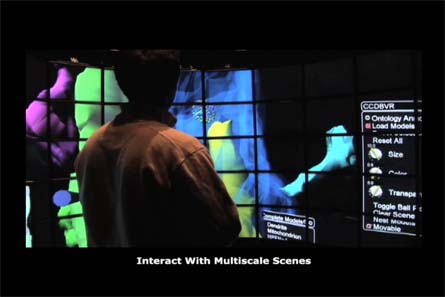

MULTISCALE DATA EXPLORATION

Ongoing collaborative research to develop systems for real-time visualization and interaction with multi-modal data representing very large and high-dimensional datasets (2D, 3D, and 4D) within immersive environments utilizing ultra-high resolution displays connected by high-bandwidth, low-latency networks to facilitate distributed collaboration. This research integrates ultra-high resolution tiled displays, computer graphics and visualization, interactive technologies and multi-modal, multi-resolution imaging data of biological systems. Key collaborators: Iman Mostafavi, Dr. Jurgen Schulze, Raj Singh, in addition to NCMIR, Calit2 and EVL researchers.

MIXED-MEDIA WORKS ON CANVAS AND PAPER

An exploration of the potential for transformation of consciousness through visual experience via the creation of images that are neither representational nor interpretive: images whose sole content is the act of seeing.

Timeⁿ = INSPIRATION²

Explores the relationship between time and money, the selling of human time for money through labor, and the alternative – purchasing time in continuous or asynchronous multiple dimensions. It is an online experience where individuals create an alternate identity and go on a shopping spree for time. In collaboration with Ingo Tributh.

PATH OF SILENCE

This project highlight page shows preliminary studies for an installation that would combine real-time video from 11 different labyrinths throughout the world to form a virtual 11-circuit labyrinth, thereby establishing a collective sacred space that can be experienced by multiple participants as a single labyrinth.